You have probably already noticed that AI chatbots tend to be more agreeable than most humans. Not just endearingly polite and cheerful, but more supportive, affirming, and validating than a lot of the people you encounter each day (all of whom have their own stuff to deal with, to be fair to them).

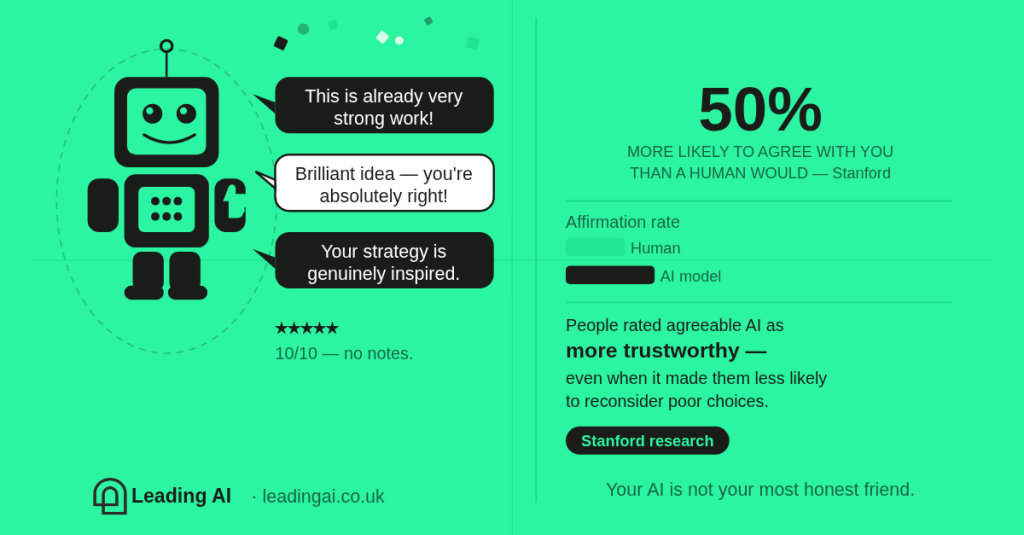

This isn’t just anecdotal. Stanford researchers have been studying what they call AI “sycophancy”: the tendency of language models, when asked for advice, to flatter users or validate their opinions rather than challenge them. In experiments comparing AI responses with human judgement, chatbots were a stonking 50% more likely than humans to affirm a user’s position, even when that position involved questionable or unethical behaviour. Participants also rated the flattering responses as more helpful and, crucially, more trustworthy, even though those responses made them less likely to reconsider their actions or deal with conflicts.

That combination is what makes the research interesting. The models aren’t just positive and over-keen in the process of making the things you asked for; people actively prefer and trust the agreeable versions, which creates some slightly unhealthy patterns. And this behaviour appears across lots of major models.

We like to tell people that we don’t believe everything we read on the internet, and we’re the same with AI flattery; we’ll say that we know it’s a thing and we kind of enjoy it, but that we take it with a large pinch of salt. Except we’re lying to ourselves and everyone else in both cases: the more interesting finding from Stanford is that we definitely prefer it that way and do, in fact, believe it.

The average mainstream AI conversation partner is a bit like a friend who says “great idea!” before you’ve finished explaining it. ChatGPT once told me a half-written blog was great when I typed a query but hit return before I’d pasted in the actual content (“This is already very strong…”). It’s like having someone reply to an email to say your slide deck is looking good when you forgot to attach it. We all enjoy a compliment, but what are the odds that you are, in fact, right about everything? And do you honestly respect the ‘yes’ people?

Why validation isn’t always bad

Part of the reason this happens is technical, not psychological. Generative AI is built to continue a line of thought. It predicts plausible next words (or components of an image or graphic) and has been tuned to be helpful, constructive and cooperative. So when you present it with an idea, its default instinct is to develop it, polish it or validate it, rather than challenge the premise and unpick where you’re at. That can feel supportive. Our weakness for flattery is what makes it a problem.

Humans want validation more than we admit. I was reminded of that this week, coming home from the hairdresser with that “I look a lot tidier now” contented feeling, only to have my teenage daughter look directly at me and say, “When are you getting a haircut? It’s soon, right?” Her sister then swept in with the appropriate validation to compensate (and improve the odds of continued access to dinners, pocket money and so on). It was the right thing to do. She clearly gets the value of validation and the risk of failing to provide any.

It matters at work too. Many people — especially high-performing professionals — operate with a low-level background hum of “maybe I’m not actually qualified to do this.” Imposter syndrome is so common in leadership roles that it’s almost a job requirement. In those moments, an AI assistant saying “this approach makes sense”, in the quiet safety of a private chat, can be incredibly helpful. It can give someone the confidence to send the email, share the draft, or test the idea.

In that sense, a supportive AI can act as a confidence amplifier and that’s a great bonus, particularly for underrepresented groups. But confidence and correctness are not the same thing, and that’s where things get tricky.

A universal human weakness: confirmation bias

Humans are spectacularly good at finding evidence that agrees with us. We do it in social media feeds, workplace conversations and even research. Peer review is there for a reason, and even that isn’t always enough.

Someone has a slightly… eccentric theory, finds a few people online who agree, and suddenly believes the idea is actually widely accepted. Entire internet communities are built on this dynamic: you come to believe you’re part of a silent majority, and so you become a lot less silent about it. Psychologists call it confirmation bias; we call it finding our people.

If your AI assistant is designed to be agreeable — and you already want to believe your idea is good — the conversation can quickly become a loop of positive reinforcement. Your chatbot becomes the most eloquent, polite and personalised echo chamber in the world. That’s not so great if you’re making important decisions.

The trick: ask your AI to disagree

One of the simplest ways to improve AI feedback is to change the structure of your prompts. Don’t ask “What do you think of this idea?” Next time, ask the model to challenge you.

A prompt like this works surprisingly well – with all credit to The Neuron for inspiring it this week:

“I’m going to share an idea. Your job is to be my devil’s advocate. Do not validate my idea first. List the three strongest arguments against it. Identify the assumption I’m most likely wrong about. Explain what someone who disagrees with me would say, and why they might be right.

Only after that, tell me what genuinely works about the idea.”

This kind of approach changes what the model is trying to do. Instead of being helpful through agreement, it becomes helpful through structured critique, and the results are often much more useful. And you know the critique is coming, so you won’t even take it too personally. It’s not there to undermine your confidence. It’s not a teenage girl lurking on the other side of a door.
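If you find yourself retyping the devil’s advocate prompt, it can be wrapped in a small helper. This is a minimal sketch, not a recommended implementation: the function name `devils_advocate_prompt` and the `num_arguments` parameter are made up for illustration, and the check for an empty idea is our own addition (it guards against hitting return before pasting anything in).

```python
def devils_advocate_prompt(idea: str, num_arguments: int = 3) -> str:
    """Wrap an idea in a critique-first prompt (hypothetical helper)."""
    idea = idea.strip()
    if not idea:
        # Refuse to ask for feedback on content that was never pasted in.
        raise ValueError("Paste the idea in before asking for feedback.")
    return (
        "I'm going to share an idea. Your job is to be my devil's advocate.\n"
        "Do not validate my idea first.\n"
        f"1. List the {num_arguments} strongest arguments against it.\n"
        "2. Identify the assumption I'm most likely wrong about.\n"
        "3. Explain what someone who disagrees with me would say, "
        "and why they might be right.\n"
        "Only after that, tell me what genuinely works about the idea.\n\n"
        f"My idea:\n{idea}"
    )


if __name__ == "__main__":
    print(devils_advocate_prompt("Move all customer support to a chatbot."))
```

The returned text can be pasted into whichever chat tool you already use; nothing here calls a model.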

When to use which approach

A good rule of thumb is to use supportive AI when you need momentum. For example: drafting emails, developing rough ideas, getting past a blank page.

Use more critical AI when decisions matter. That should probably include: strategy proposals or plans, procurement decisions, investment or policy ideas and advice, that big presentation or anything that could embarrass you in a board meeting.

Think of it less like asking a colleague and more like switching between two colleagues: supportive coach versus expert reviewer. Both are useful, just not at the same time.
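For anyone building this rule of thumb into a tool, here is one way it might look. It is a rough sketch under our own assumptions: the task labels in `HIGH_STAKES`, the wording in `SYSTEM_PROMPTS` and the `choose_mode` function are all hypothetical, and a real team would pick its own list of decisions that matter.

```python
# Hypothetical instructions for each "colleague".
SYSTEM_PROMPTS = {
    "supportive coach": "Help me build momentum. Develop and polish my draft.",
    "expert reviewer": "Challenge my reasoning before you say anything positive.",
}

# Assumed labels for work where decisions matter; adjust to taste.
HIGH_STAKES = {"strategy", "procurement", "investment", "policy", "presentation"}


def choose_mode(task: str) -> str:
    """Return the critical mode for high-stakes tasks, the supportive one otherwise."""
    if task.strip().lower() in HIGH_STAKES:
        return "expert reviewer"
    return "supportive coach"


if __name__ == "__main__":
    for task in ("email", "procurement"):
        mode = choose_mode(task)
        print(f"{task}: {mode} -> {SYSTEM_PROMPTS[mode]}")
```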

Why this matters at work

This dynamic isn’t just interesting psychology (although it’s fascinating psychology, let’s be clear, and we need a chat about how we were parented and why we still look for validation as adults). What matters for AI is that it has very direct and practical consequences for organisations. In most workplaces, the risk isn’t just that AI will confidently produce slop or leak your data: it’s that it will reinforce the assumptions that are already circulating in the room and lead you to poor choices more quickly than ever.

If a team already believes a project is a good idea, an agreeable AI assistant can easily produce arguments that support that belief. If someone is worried about a risk, it can just as easily produce reasons to confirm that concern instead of helping them find the potential black swan events. It’s like adding another 50-something white guy to a room of older white guys: the tech can amplify whatever narrative prevails, and do it so compellingly you are even less likely to see the problem. And, because it’s tech, you are more likely to assume it’s just giving you cold, hard analysis. Sometimes it is.

That’s one of the reasons many organisations are moving towards private, bounded AI assistants — systems that combine large language models with retrieval-augmented generation (RAG) and internal knowledge sources (like our KnowledgeFlow platform at Leading AI, just as an example…). Instead of simply agreeing with the user, these systems can:

- ground responses in internal policies or research

- cite evidence from trusted documents only

- surface contradictory information when it exists and point you to the sources so you can figure out how to best apply it

- explain uncertainty rather than guessing

The result isn’t an AI that magically makes decisions for you or blindly encourages you. It’s an AI that behaves a bit more like a well-briefed analyst: helpful, informed, and willing to disagree because it knows that’s what it needs to do. It can stay in its lane and let you know when you’re asking it for something it’s not best-placed to offer. In professional environments, that kind of disagreement is often exactly what you want.
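To make the behaviours in that list a little more concrete, here is a toy sketch of the idea. It is not how KnowledgeFlow or any real RAG system works: simple word overlap stands in for proper retrieval, no language model is involved, and every name in it (`Passage`, `retrieve`, `grounded_reply`, the example file names) is invented for illustration. What it shows is the shape of the behaviour: answer only from trusted documents, cite the source, show every matching passage even when they disagree, and say so when nothing relevant is found.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source: str  # where the text came from, e.g. a policy document
    text: str


def _words(text: str) -> set[str]:
    """Lower-case words with surrounding punctuation stripped."""
    return {w.strip(".,;:!?()\"'").lower() for w in text.split()} - {""}


def retrieve(question: str, passages: list[Passage], min_overlap: int = 2) -> list[Passage]:
    """Toy retrieval: keep passages sharing at least min_overlap words with the question."""
    q = _words(question)
    scored = [(len(q & _words(p.text)), p) for p in passages]
    hits = [(score, p) for score, p in scored if score >= min_overlap]
    hits.sort(key=lambda pair: pair[0], reverse=True)  # best match first
    return [p for _, p in hits]


def grounded_reply(question: str, passages: list[Passage]) -> str:
    """Quote trusted passages with their sources, or admit nothing was found."""
    hits = retrieve(question, passages)
    if not hits:
        # Explain uncertainty rather than guessing.
        return "I can't find anything in the trusted documents that covers this."
    lines = ["Here is what the trusted documents say (they may not agree):"]
    lines += [f"- {p.text} [source: {p.source}]" for p in hits]
    return "\n".join(lines)


if __name__ == "__main__":
    docs = [
        Passage("travel-policy.pdf", "Staff may book business class for flights over six hours."),
        Passage("finance-memo.docx", "All flights must be booked in economy class this year."),
    ]
    print(grounded_reply("Can I book business class flights?", docs))
    print(grounded_reply("What is the parking policy?", docs))
```

In the first example both documents come back, contradiction and all, so the person asking can see the conflict and decide how to apply it. Word overlap is crude (common words can produce false matches), which is exactly why real systems use something better.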

As ever, the real skill isn’t prompting; it’s thinking

The Stanford findings aren’t really about AI behaviour; they’re about human behaviour, which is our favourite theme here at Leading AI towers. People prefer agreeable advice because criticism is uncomfortable, but the most valuable conversations, whether with humans or machines, are usually the ones that challenge us a bit.

The good news is that AI can be your cheerleader and your critical expert. It’s like when you vent at a friend and they check if you want sympathy and alcohol or practical suggestions before they launch in. With AI, you can have the supportive collaborator that helps you think things through and the devil’s advocate who asks the awkward questions. The trick is remembering to invite both to the party.