My husband recently sent me this link: I quit ChatGPT — here’s how I moved everything to Claude and Gemini without losing my data (or my mind). The article lays out, step by step, how the writer decided to leave ChatGPT behind and switch to Claude – a clean break, but one that comes with, it turns out, a pretty decent baggage allowance.

My husband sent the article (on my birthday, incidentally) with no commentary at all, but that’s fairly standard. He’s not much of a talker, but I know this is his equivalent of a kind but firm intervention. Fortunately, I know he meant it as a very literal explainer of what to do, and a nudge to crack on and do it – not as a deeper metaphor or hint at a fresh start. There’s very little subtext to his text messages, as a rule.

And I have form here, as he well knows. I like to make him go first with tricky things when it’s something we both have to do. When we had laser eye surgery, many years ago, I made him go first to see what it was like. Not because I’m not super brave – obviously – but because it felt sensible to observe the experience before committing my own eyeballs to lasers.

The tipping point

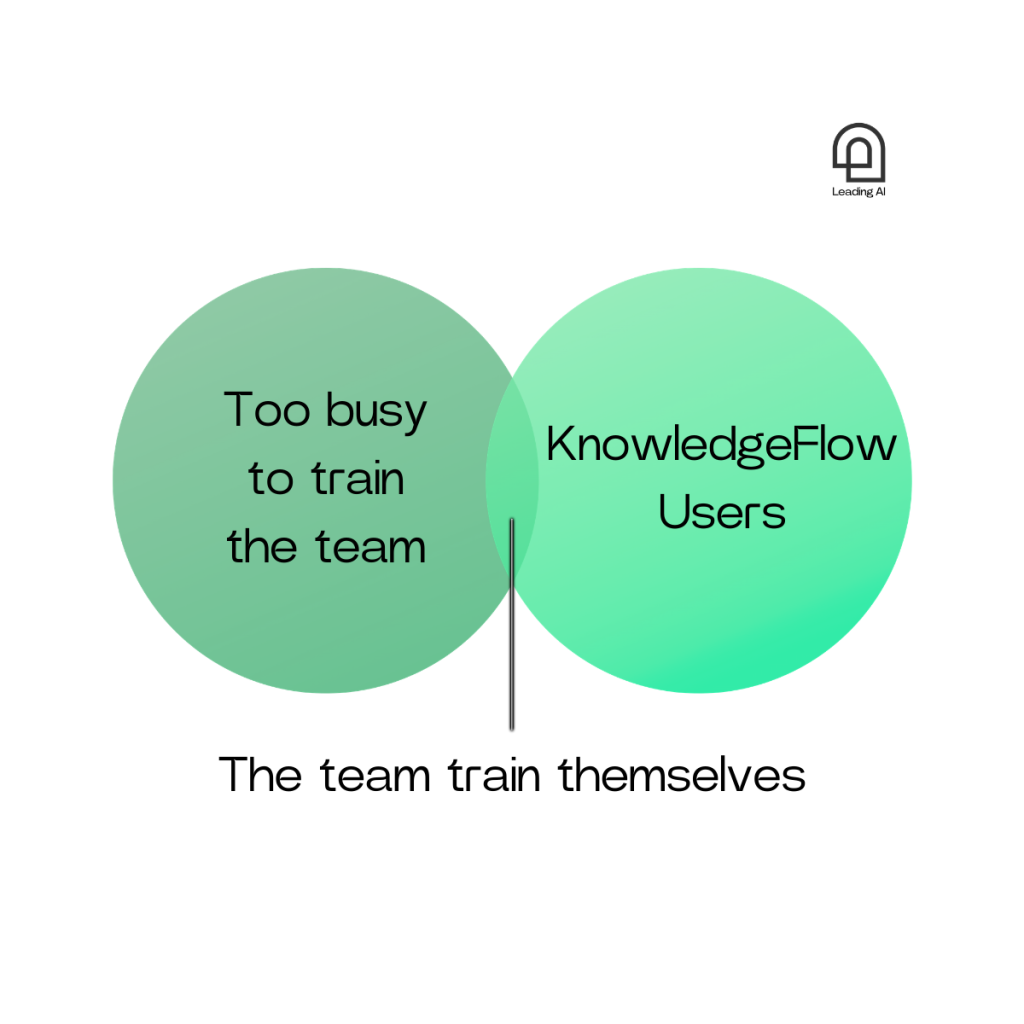

With some of the AI that I use outside of our awesome in‑house Knowledge Flow, I can feel myself lazily drifting toward default tools without really considering the alternatives, while not quite feeling comfortable about letting that happen completely. I think a lot of us are in the same place: slowly expanding the scope of our conversations with ChatGPT, but also wondering if we really should.

Everyone knows the landscape is shifting quickly. Every week seems to bring a new model release or a LinkedIn post confidently declaring that the entire industry has just been transformed. Most of us, though, still reach for the same tool we started with. And for a lot of people, that tool is ChatGPT. Which raises an interesting question.

Should we?

The thinking person’s AI partner

There are some uncomfortable reasons not to make public ChatGPT your personal or organisational default. Independent tests keep finding that a substantial share of AI answers, including from ChatGPT, are simply wrong – a recent BBC‑coordinated study found significant issues in around 45% of AI answers on news topics across major assistants – and those mistakes are often delivered with total confidence.

At the same time, OpenAI is starting to test ads in and around the ChatGPT interface for some users. The company says ads are clearly labelled and do not influence the model’s responses, but in a space where you only see one main answer, ads still blur the psychological line between neutral advice and sponsored content. Critics from across the political spectrum have also highlighted that large language models can both replicate harmful social biases and appear to lean in particular ideological directions, which is a problem if you value neutrality or want to build an inclusive workplace.

Add to that wider concerns about privacy, training data, and the fact that all the major vendors sit inside a fast‑moving and fragmented US regulatory environment – alongside growing interest from US government and defence customers in closer partnerships with major model providers – and you have a perfectly rational case for saying: use ChatGPT, sure, but don’t build your most sensitive or mission‑critical workflows on top of the public version. Maybe don’t pour your heart and soul and mind into it.

There’s also a very real International Women’s Day angle here. In a lot of teams, women already do a disproportionate share of the invisible work: smoothing relationships, mentoring juniors, drafting the careful email or briefing that keeps everything on track. That’s “give to gain” at its best when it’s recognised and rewarded – but when we pour that judgement and emotional labour into public tools like ChatGPT, the benefit is captured by the platform, not by us or our organisations. Being more deliberate about where we use AI, and favouring trusted or private spaces for our best thinking, is one small, practical way to make sure that the value of that work actually flows back to the people and communities who are doing it, rather than disappearing into someone else’s model.

What are my options?

For most of what we do with the “big” models – drafting an email, summarising a report, working up a plan – only a handful of tools really matter. They’re essentially your thinking partners: language models that help you work with information and write things better and/or faster.

For most organisations, the realistic choices fall into four camps.

- Public “general purpose” tools (ChatGPT, Claude, etc.)

These are the ones people start with at home: ChatGPT in a browser, Claude in a separate tab. They’re very capable now: since GPT‑4.1 and Claude 3.5 Sonnet, they’ve been able to handle long documents, multi‑step reasoning and high‑quality drafting far better than earlier versions.

The upside is convenience and quality. The downside is that most consumer accounts don’t give you strong guarantees on data residency, retention and access, and it’s hard to enforce organisational guardrails on “whatever people log into on their phones, out of habit”.

- Microsoft Copilot (or, now and then, Gemini) inside your digital environment

Copilot is increasingly “just there” – in Word, Excel, PowerPoint, Outlook and Teams – and for many staff it will be their first real AI assistant. It works where people already are (email, documents, meetings, chats) and it sits inside your Microsoft 365 tenant, respecting existing permissions, sensitivity labels and DLP policies.

That’s why your IT team are asking you to use it, even though it feels clunky compared to ChatGPT on your phone. It can use documents, emails and chats you already have access to, without shipping data outside your Microsoft cloud, and it’s governed by your existing identity, logging and compliance stack. It’s not usually the frontier model you’d pick if you were optimising purely for raw quality or efficiency – but for basic day‑to‑day tasks, it’ll do.

- Enterprise plans for big frontier models

OpenAI, Anthropic and others now offer enterprise or “for business” plans as well as premium consumer versions, with stronger privacy commitments, admin controls and SLAs. These give you access to their best models (e.g. GPT‑5.4) with higher rate limits and often lower effective costs at scale, plus contractual guarantees that your prompts and data aren’t used to train public models, and options for data retention and regional hosting.

It’s a good middle ground if you want the latest models and better governance than public accounts, but don’t yet need your own fully customised flock of private bots. You do, however, need to be a savvy shopper and contract manager to get the right thing at the right price.

- Private or organisation‑specific assistants

The final category is what you might call “private AI”: an assistant in your own environment, grounded on your own documents, policies and data. Under the bonnet, these kinds of tools (ours included) usually use one of the major models (OpenAI, Anthropic, others) but run within your cloud or virtual private cloud, with your own access controls, logging and monitoring, and with explicit rules about what’s indexed, how it’s updated and the kind of response you get back.

That’s the space that companies like ours work in: taking strong underlying models and wrapping them in the right context, security and user experience for a particular organisation. Done well, these can be surprisingly affordable, especially for focused use cases (for example, an internal “policy buddy”), because you’re not paying for a huge, open‑ended platform; you’re paying for a scoped assistant with clear value.

What really matters

When people compare AI tools they often focus on which model is technically “best”. In practice, three things matter more (imho).

- How easily you can access the tool. This explains why Copilot is gaining traction, and why Bing is a surprisingly well‑used search engine: people use what’s in front of them. If you want people to use something else, it needs to be front and centre.

- How you want to handle data. Your data governance team already have a view on this; you need to work out how it aligns with your actual ways of working, and choose tools that sit in the overlap between “practical for you” and “acceptable for your data lead”.

- What the model feels like to work with. We like things to just work and feel intuitive – which also goes some way to explaining why ChatGPT is the king of positive reinforcement. “Thanks, ChatGPT, that blog topic was inspired. Good job to you too!”

Which brings us back to where we started. Can I quit? Should I quit?

Yes, and that’s up to you.

We know we’re bad at switching banks and utility companies even when it’s in our best interests, but we also know the new provider will be keen to make it easy for us once we reach out to them – and this is no different.

Switching AI models is shockingly easy and – because this is precisely the challenge new users are grappling with – Claude will even tell you what you need to do, and the prompts you need to use, to make it seamless. Then your new AI will work in pretty much the same way as your old one, using your imported chat history: you type a prompt, paste in documents, ask follow‑up questions. Same old, same old. You won’t miss ChatGPT. Same with switching to Gemini, if that’s where you want to go. I’m not here to dictate.

The honest answer is: whatever model you use, you’re doing the right thing as soon as you move from unthinking use of whatever’s on your phone or laptop to a deliberate, explainable choice about where and how you or your organisation use AI.

In the end, most organisations that reap the rewards of AI don’t settle on a single AI tool. Instead they end up with a mix of things. I asked around our team and, at the time of writing, the list includes Claude for coding, ChatGPT for drafting, Gamma for slide decks, Canva for graphics, Perplexity for finding public information, and our private tools for everything that really matters to us, piggy‑backing on those big frontier solutions.

You do you.