No one likes to admit they’re doing pointless busy-work.

I used to visit schools most weeks. For context: I worked in UK education policy but wasn’t educated here, so I had some catching up to do. I wasn’t just lurking. On one visit, accompanied by a retired school inspector, I walked into a classroom where a group of pupils were making posters about a topic they had covered earlier that term. There were felt tips, carefully ruled headings, bullet points in different colours. It looked impressive. Everyone was busy. The walls would soon display evidence of productive learning.

My colleague wasn’t so easily fooled, and I learned a new phrase on the train home: “pointless busy-work”. He pointed out that the pupils weren’t grappling with anything new. They weren’t testing a tricky idea or stretching their understanding. They were producing something that looked like learning. It may have consolidated a bit of knowledge, but it was safe. Visible and reassuring. Since then, I’ve noticed how often tasks like this appear when there’s a substitute teacher in — the modern-day equivalent of wheeling in the big telly and knowing you’re about to watch a BBC costume drama made in the 70s.

It strikes me that adults do this too.

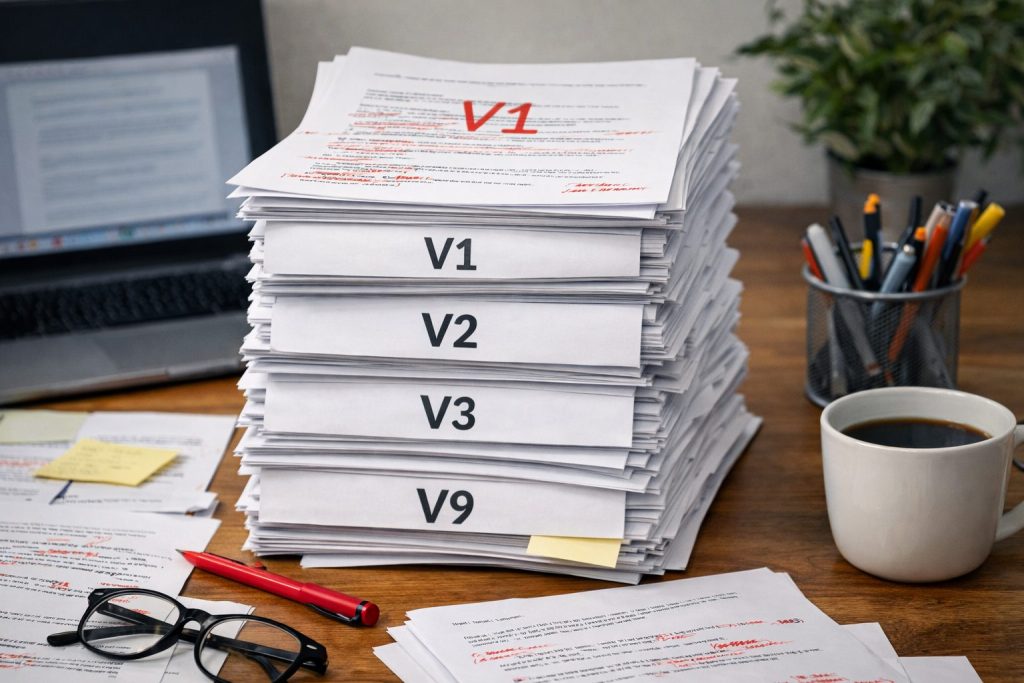

We produce slide decks that summarise the previous deck. We write strategy papers that refine the earlier strategy paper. We generate output that signals progress, even when the underlying thinking hasn’t moved very far. We are very good at performative productivity.

I thought of that classroom when I read the recent Harvard Business Review argument that AI doesn’t reduce work, it intensifies it.

The claim feels right. People draft faster, so expectations rise. Turnaround times shrink and more versions circulate. What used to take half a day now takes an hour, and instead of reclaiming that time, we fill it — because we are (mostly) grafters and can’t help ourselves. The backlog doesn’t disappear; it multiplies.

The more interesting question for me is not whether AI makes us busier. It’s what kind of work is being intensified.

When output gets cheaper

There is a growing body of research on “cognitive offloading”, the tendency to use tools to reduce mental effort, and many, many papers have been published on it.

We have always done it: we write lists, use calculators, rely on search engines. These tools free up capacity, but they also change how deeply we engage with information.

More recent studies looking at the use of large language models in writing and problem-solving tasks suggest that heavy reliance can reduce recall and limit deeper processing unless users deliberately reflect and review.

The tools are helpful, but they can quietly displace effort — and, crucially, thinking.

None of this means AI makes people less intelligent. It does suggest, though, that if we consistently outsource the thinky bits of our work — drafting, structuring, synthesising all those ideas everyone called out in that workshop — we might not be exercising the muscles that make us good at judgement and challenge. Skills improve through use: if the tool always goes first, independent thought can slip into second place.

Now layer that onto economics: generative AI has driven the marginal cost of producing competent text close to zero. When something becomes cheaper (think ‘single-use plastics’ if you’re not convinced), we rarely produce less of it; we usually produce more. In work, that means more briefings, more internal updates, more “content”. Most of it is technically fine: clear enough, polished enough. But not all of it needs to exist, let alone be serviced by data centres and reviewed by the poor human on the hook for approvals.

This is where everyone’s concerns about “AI slop” begin to make sense.

If we lower the cost of production without raising the bar for necessity or quality, we risk flooding systems with low-value material that then demands human review, correction and governance. The time saved in drafting can easily be reabsorbed in checking, editing and managing downstream consequences.

At its worst, this is the adult version of making that poster: visible effort, minimal intellectual stretch.

Volume or depth?

Except I don’t think that’s the whole story.

Slop is certainly being churned out, but in both private and non-profit organisations, we’ve seen something much more hopeful. We’ve seen AI remove genuine friction: a team member who no longer spends 30 minutes hunting for a clause in a dense document can spend that time thinking properly about how it applies to a complex situation. A charity worker who produces a first draft of a funding bid in ten minutes can invest real energy in refining the argument and tailoring it to the funder’s priorities. A housing officer who is not rushed for time can respond with more nuance to an individual tenant’s actual circumstances.

Yes, work is intensified, but the intensity can shift upwards. Less time is spent on searching and repetition; more time is spent on judgement and tailoring. That is not pointless busyness; it is enrichment. In social care, this has long been called “reflective practice”; the difference now is that there is at least a fighting chance of making time and headspace to finally do it.

Maybe the real dividing line is not between “AI reduces work” and “AI intensifies work”, but between intensifying volume and intensifying thinking.

Think first, prompt second

The answer isn’t to ban the tools or pretend we can rewind to 2022, back when we were still arguing about hybrid working and posting screenshots of AI-generated limericks like we’d discovered fire. The answer is to use them in a way that doesn’t hollow out the work that matters. One simple rule to follow is this: think first, prompt second.

Before opening the window to your tool of choice*, decide what you actually think is needed. Jot down the three points you’d make if someone stopped you in the corridor and asked. It doesn’t need to be elegant; the point is that you’ve done the mental lifting before a machine steps in.

“Cognitive offloading” is a polite way for psychologists to say we hand work to machines. Frankly, there’s nothing wrong with that. But the effortful bit — wrestling with an idea — is what builds understanding. If the AI always goes first, you never quite make an internal map. Your Mind Palace has no corridors to walk along.

Another solution is to think of AI as a critic rather than a ghostwriter. Instead of asking it to produce the answer, ask it what’s wrong with yours. Ask it what assumptions you’re making. Ask it what a sceptical reader or a particular flavour of expert would challenge. That is much harder to outsource, and much more valuable.

If, as a manager, you reward volume, you will get volume. If you praise speed above all else, you will get speed. But if you ask people to explain their reasoning, or to justify why something needed to be written at all, the quality bar stays high.

You can also resist the reflex to fill every efficiency gain with more output. If AI saves 30 minutes, it does not automatically follow that you must produce another document in those 30 minutes. (I am mainly writing this here to remind myself.) Use the time to think more carefully about the tricky stuff, or to have a proper conversation with a colleague, or to test whether the thing you are about to send to that endless cc list is necessary.

The risk is not that we become lazy. It is that we become extremely efficient at producing work that looks impressive and changes very little. The opportunity is that we might finally stop making posters — and start using the time we save to think.

* I’d obviously recommend Leading AI’s Knowledge Flow: your front door to a whole host of secure, reliable assistants that encourage curiosity. But I recognise you might also dip into a few other solutions. I’ve got Perplexity and Gamma open on other tabs right now, so I’ll not judge.