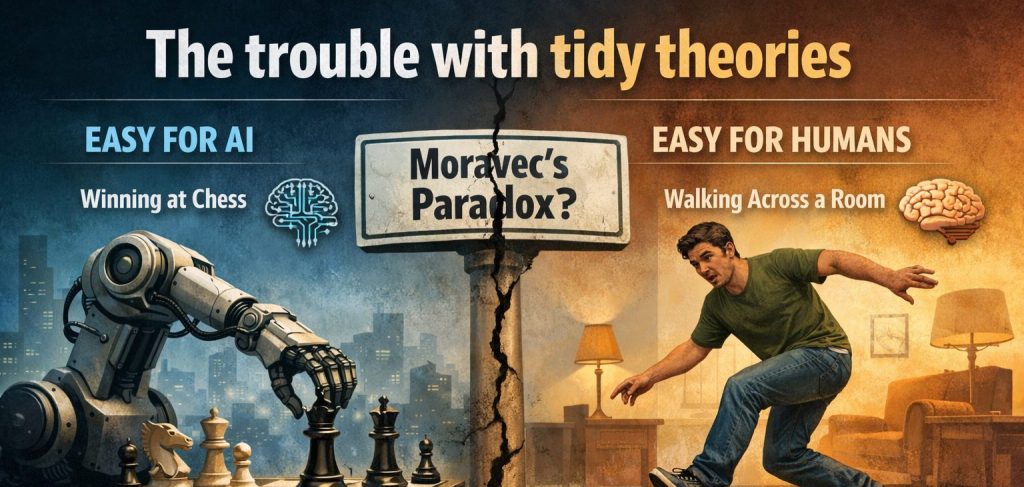

There’s an idea that’s been doing the rounds in AI discussions for years. It goes something like this: tasks that are hard for humans are easy for AI, and tasks that are easy for humans are hard for AI. So sayeth the thought leaders.

When people want to sound really credible, the idea is given a name: Moravec’s paradox. It’s become a kind of assumed truth when people talk about AI. Chess? Easy for machines; harder for us. Walking across a room? Tricky for a bot, easy for us. You probably nodded when you read that. Feels right, right?

There’s just one problem.

As Arvind Narayanan has pointed out recently, Moravec’s paradox was never properly tested. It emerged from a narrow set of examples. Proponents of the paradox focused on a small number of tasks where humans and machines diverged in interesting ways, quietly ignoring the thousands of tasks that are easy for both, or hard for both.*

And we call that a selection effect: a well-evidenced phenomenon across a whole host of populations and situations. As with confirmation bias, we tend to see what we want to see.

What’s impressive is how long the theory has survived confrontation with reality. The breakthroughs in computer vision around 2012 — when neural networks suddenly leapt forward on tasks like image recognition — directly contradicted the idea that perception and pattern recognition would remain peculiarly difficult for machines.

Why tidy theories are so irresistible

Neat theories are seductive because they reduce complexity. They give us something solid to hold onto when systems are moving faster than we can comfortably keep up with. As our world becomes more complex and it gets harder to manage the ripples from every choice we make, simplicity can feel more appealing than ever.

Tidy theories also do something more subtle, and sometimes more dangerous: they give us permission to stop thinking.

A really good tidy theory draws a clean line through a mess. It tells us where we stand, what matters, and what we can safely ignore. In the case of Moravec’s paradox, it reassured us that whatever else AI might become, there would always be a clear dividing line between “machine intelligence” and “human intelligence”. It allows us a contented little sigh — and then we move on.

That reassurance turns out to be expensive. But before we get back to AI, it’s worth looking at another example of a theory that spread not because it was well-evidenced, but because it felt right.

You’ll know this one.

Myers-Briggs and the comfort of type casting

The Myers-Briggs Type Indicator didn’t start out as a scam. Katharine Cook Briggs and Isabel Briggs Myers were deeply sincere in their attempt to translate Carl Jung’s ideas into something practical and accessible. The appeal is obvious: four neat dimensions, sixteen types, and the sense that you can finally explain why you are the way you are — and why other people are so different.**

Sadly, decades of psychological research haven’t been kind to Myers-Briggs as a predictive tool. Test–retest reliability is weak (people often get different “types” at different times), and there’s little evidence that the categories meaningfully predict behaviour, performance, or compatibility. Most personality traits, it turns out, exist on continua, not in boxes.
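If you want to see why chopping a continuum into boxes makes for wobbly labels, here’s a minimal sketch. It is a toy model, not real MBTI data: the function name, the four made-up dimensions, the amount of measurement noise and the cut-off at zero are all my assumptions for illustration.

```python
import random

def fraction_changing_type(n_people=10_000, noise_sd=0.3, n_dimensions=4, seed=7):
    """Toy model: share of people whose four-letter 'type' differs between two sittings.

    Every number here is an illustrative assumption, not an MBTI statistic.
    """
    rng = random.Random(seed)
    changed = 0
    for _ in range(n_people):
        flipped = False
        for _ in range(n_dimensions):
            trait = rng.gauss(0, 1)                  # underlying continuous trait
            first = trait + rng.gauss(0, noise_sd)   # score at the first sitting
            second = trait + rng.gauss(0, noise_sd)  # score at the second sitting
            # The "letter" is simply which side of zero the score lands on.
            if (first >= 0) != (second >= 0):
                flipped = True
        changed += flipped
    return changed / n_people

print(f"Share getting a different type on retest: {fraction_changing_type():.0%}")
```

Nobody’s underlying traits change between the two sittings in this model. The labels move anyway, because plenty of people sit close to a cut-off on at least one dimension, and a little noise is enough to tip them over.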

You already know this on some level. You’ve developed habits through experience that become your behaviours, whatever your starting point when you were a fresh-faced graduate being assessed as an INTP and worrying whether that might not be the best “type” for making new friends.

And yet Myers-Briggs is remarkably sticky in a lot of workplaces, including in hiring and leadership development. In some countries, it’s become part of everyday identity. In South Korea, knowing your MBTI type is common social shorthand even outside work — a quick way of explaining yourself to others.

When everyone knows their “type”, the risk is that curiosity is replaced with assumption. We stop asking how people behave in specific contexts, over time, under pressure. We type-cast ourselves and others, and forget how much we can learn and change.

Not all generalisations are nonsense

At this point, I should be clear about something. The problem isn’t generalisation per se — which we rely on to move at any kind of pace without overthinking and triple-checking every tiny thing. The problem is unsupported generalisation, especially when it’s used unconsciously.

Some ideas hold up across different spaces and over time because they’re grounded in observation rather than wishful thinking. Perplexity and I have put together a top three of work-related rules that are more robust:

Jevons paradox

Improvements in efficiency don’t necessarily reduce overall resource use — they often increase it, because cheaper or easier consumption drives demand. I would refer readers to the increase in both the number and length of emails in the age of genAI. Nuff said.
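The arithmetic behind this is short enough to sketch. The example below assumes a textbook constant-elasticity demand curve; the function name and the elasticity values are mine, chosen for illustration rather than measured from anyone’s inbox.

```python
def resource_use_after_efficiency_gain(efficiency_gain, elasticity, baseline_use=100.0):
    """Resource use after an efficiency gain, under constant-elasticity demand.

    Illustrative assumption: the cost of a unit of output falls in proportion
    to the efficiency gain, and demand scales with price ** (-elasticity).
    """
    relative_price = 1.0 / efficiency_gain
    relative_demand = relative_price ** (-elasticity)
    # More output is demanded, but each unit of output needs less resource.
    return baseline_use * relative_demand / efficiency_gain

# Doubling efficiency, starting from 100 units of resource use:
print(f"{resource_use_after_efficiency_gain(2, elasticity=0.5):.1f}")  # -> 70.7
print(f"{resource_use_after_efficiency_gain(2, elasticity=1.0):.1f}")  # -> 100.0
print(f"{resource_use_after_efficiency_gain(2, elasticity=1.5):.1f}")  # -> 141.4
```

Under these assumptions, everything hinges on how strongly demand responds to the lower cost. When the response is weak, efficiency saves resources. When it is strong (an elasticity above 1), total use goes up even though each unit got cheaper.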

Goodhart’s Law

When a measure becomes a target, it ceases to be a good measure. This shows up everywhere. The right target can usefully draw attention and energy to help solve a problem, but bad targets can drive weird behaviours that don’t really get you what you wanted. Do we wish fewer children had to be referred to social care teams? Yes. Do we want to incentivise people not to refer a child if they’re worried about them? Hell no.

Regression to the mean

The fundamentally boring statistical reality that explains why extreme performance (good or bad) is so often followed by something more ordinary. It’s one of the most robust patterns in statistics, and one of the most consistently ignored, especially when we’d rather believe in lucky streaks or genius interventions.

My dad (a statistician) explained it to me when I was about nine, to help me understand why he was very tall relative to everyone else in our family, but I was nonetheless destined for a lifetime of asking people to reach things down from the top shelf. He probably thought talking about maths would make me feel better, or make me care more about statistics. He was half right.
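For anyone who would rather see it than take a statistician’s word for it, here’s a minimal simulation. It assumes a deliberately simple model in which every score is stable skill plus an equal helping of luck; the function name, the parameters and that equal split are my assumptions, not a claim about any real dataset.

```python
import random

def simulate_regression_to_mean(n_people=10_000, top_fraction=0.1, seed=42):
    """Toy model: observed score = stable skill + luck on the day."""
    rng = random.Random(seed)
    skill = [rng.gauss(0, 1) for _ in range(n_people)]
    first = [s + rng.gauss(0, 1) for s in skill]   # first attempt
    second = [s + rng.gauss(0, 1) for s in skill]  # second attempt, fresh luck

    # Select the top performers using the first attempt only.
    cutoff = sorted(first, reverse=True)[int(n_people * top_fraction) - 1]
    top = [i for i in range(n_people) if first[i] >= cutoff]

    mean_first = sum(first[i] for i in top) / len(top)
    mean_second = sum(second[i] for i in top) / len(top)
    return mean_first, mean_second

mean_first, mean_second = simulate_regression_to_mean()
print(f"Top group, first attempt:   {mean_first:.2f}")
print(f"Same group, second attempt: {mean_second:.2f}")
```

The top group still does better than average the second time, because some of their first score really was skill. But their average slides back toward the middle, because the lucky part of it doesn’t repeat. No intervention, no loss of form, just arithmetic.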

What these evidence-based ideas have in common is important. They don’t tell us who we are, or flatter us. They don’t offer identity or reassurance. They tell us what tends to happen whether we like it or not.

That’s the standard we should be holding AI theories to as well.

What tidy AI theories get wrong — and why it matters

Moravec’s paradox didn’t just misdescribe AI capabilities; it distorted how we respond to them.

On one side, it fed alarmism. If machines are racing ahead on “hard” cognitive tasks, then surely superhuman reasoning must be imminent. Better throw more resource at the problem. Better prepare for a future where AI suddenly leaps beyond us.

On the other side, it also created false comfort. If AI will always struggle with perception, common sense, or the physical world, then there are whole areas we don’t need to worry about. Progress will be slow. Limits will hold.

Both instincts turn out to be unreliable guides. AI — like most technology — hasn’t advanced in neat, human-like stages. It improves unevenly, opportunistically, and often in ways that only become obvious in hindsight. And when we misunderstand what systems are actually good at today, we make poor decisions about where to deploy them, how much to invest, and what trade-offs we’re making.

This is where green AI comes in

Bet you didn’t think this was going to be a blog about sustainability, but here we are.

The point is that we don’t always need to have a grandiose debate about what AI will eventually become. We need to ask a simpler, more practical question: what is this system actually good at, in this context, at this cost?

Smaller, narrower, fit-for-purpose models often deliver more value than giant, general ones — especially once you factor in energy use, infrastructure, and the cumulative impact of deployment at scale. But tidy theories push us toward maximalism: bigger, broader… just in case.

It’s not just compute that’s wasted, but human effort: teams bending processes around tools that were never the right size or shape for the job in the first place. Tidy theories also distract us from where resource use really happens.

Green AI isn’t about moral righteousness or technological pessimism. It’s about intellectual honesty. When it comes to AI, the cost of believing the wrong story isn’t just confusion.

It’s waste.

* Hans Moravec was a roboticist at Stanford in the 1970s and at Carnegie Mellon from 1980. He was making an observation based on the limits of early robotics, not proposing a universal law. Marvin Minsky, MIT legend and AI pioneer, later helped turn it into A Whole Thing. Minsky also advised Stanley Kubrick on 2001: A Space Odyssey. Which fits, when you think about it.

** Don’t be too hard on Katharine Cook Briggs and Isabel Briggs Myers — we’re the ones who made things weird in the decades that followed. Katharine wasn’t a psychologist and never claimed to be; she was a writer and keen observer of human behaviour, trying to understand why people think and act so differently. Carl Jung’s Psychological Types gave her a framework for helping people — especially families — make practical sense of those differences.

Isabel, meanwhile, was working during the Second World War and worried — rightly — about “misplaced talent”, having seen people waste their strengths when pushed into jobs that drained them. She wanted a sorting aid to better match work to people. I bet she was a pretty decent colleague.